Conversion Optimization with A/B Testing

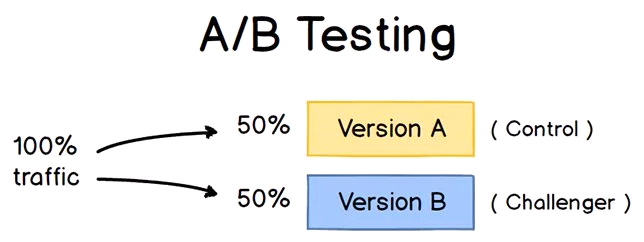

At Grio, we offer a wide range of services focused on helping our clients optimize their web presence. One such service is A/B testing. A/B testing, also known as split testing, is a marketing experiment that compares two versions of your content by “splitting” your audience and analyzing which variation performs best. In other words, you create a variant of your content, then show version A to one half of your audience and version B to the other half and analyze the results.
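
To make the "splitting" idea concrete, here is a minimal sketch of how a visitor could be assigned to version A or version B. It assumes each visitor has a stable ID (for example, from a cookie); the function name `assign_variant` and the experiment name `homepage-cta` are made up for illustration and do not come from any particular testing tool.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "homepage-cta") -> str:
    """Return "A" or "B" for a visitor, stable across repeat visits."""
    # Hash the (hypothetical) experiment name together with the user ID so
    # the same visitor can land in different groups for different experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the hash onto 0..99 and split the range down the middle (50/50).
    bucket = int(digest, 16) % 100
    return "A" if bucket < 50 else "B"


# Rough check of the split on 10,000 made-up visitor IDs.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}")] += 1
print(counts)
```

Hashing rather than flipping a coin on every page load means a returning visitor always sees the same version, which keeps the two groups cleanly separated.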

A/B testing takes the guesswork out of website and application optimization and enables you to make data-driven decisions. In this post, I’ll cover the benefits, procedure, and pitfalls of A/B testing, along with my top recommendations for A/B testing tools.

The Benefits of A/B Testing

A/B testing is an invaluable tool for businesses: it is low in cost but high in reward. It allows marketing teams to understand which simple changes, such as minor adjustments to words, phrases, images, or videos, have the largest impact on consumer metrics. Some of the most noteworthy benefits of A/B testing include:

- Increased Traffic: Nearly all businesses rely on traffic to generate new customers. Testing small changes, such as alternative page titles or headlines, shows which versions bring more visitors to your site.

- Higher Conversion Rate: A conversion occurs when a visitor or user takes a desired action (purchasing a product, filling out a form, using a resource, etc.). A higher conversion rate means that a greater percentage of the people entering your site are taking that action. By making small changes to your calls to action (CTAs), you can increase the number of conversions happening on your website.

- Lower Bounce Rate: Bounce rate is the percentage of visitors who leave (or “bounce from”) your app or website without engaging further, typically after viewing only one page. Changing photos, headings, fonts, and introductions can entice visitors to stay on your page longer, thereby increasing their chance of converting.

- Low Risk/High Reward Modifications: The biggest risk when redesigning a website is losing existing customers and crashing the conversion rate that you were hoping to enhance. A/B testing allows you to make small modifications in a strategic manner to ensure that you don’t negatively influence your current consumer metrics.

- Statistically Significant Improvements: Any data that can take your website modification proposals from “I think” to “I know” is worth the effort. Creating data-driven, specific alterations will reduce your workload and provide your business with the comfort of a well-researched plan.
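
The metrics above come down to simple arithmetic. As a small sketch, here is how conversion rate and the relative lift between a control and a challenger might be computed; the visitor and conversion counts are invented for illustration.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who completed the desired action."""
    return conversions / visitors if visitors else 0.0


def relative_lift(control_rate: float, challenger_rate: float) -> float:
    """Improvement of the challenger over the control, relative to the control."""
    return (challenger_rate - control_rate) / control_rate


# Made-up example: 4,000 visitors per version.
control = conversion_rate(120, 4_000)
challenger = conversion_rate(150, 4_000)
print(f"control {control:.2%}, challenger {challenger:.2%}, "
      f"relative lift {relative_lift(control, challenger):.1%}")
# prints: control 3.00%, challenger 3.75%, relative lift 25.0%
```

A lift like this is only a starting point: whether it reflects a real improvement depends on the sample size and on a significance check.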

A/B Test Procedure

A/B tests are scientific experiments. As such, it is best to follow a set procedure when performing your tests to minimize the influence of outside variables. I recommend the following procedure:

- Pick One Variable to Test: A/B tests are designed to analyze the results of one change at a time. Pick an element whose change is likely to make a difference in your conversion rate. Impactful elements typically include headlines, CTAs, colors, layout, or timing.

- Identify Your Goal: Ensure that you have a specific goal in mind for your test. What metric are you hoping to improve? Conversion rates? Sales? Total time visitors spend on your page? It’s best to focus on a single metric at a time to get cleaner data from your testing.

- Create a “Control” and a “Challenger”: Your control will be your existing content, while your challenger will be the altered content. When creating a challenger, make sure that you have a clear concept of why you’re changing the element you choose. If you create a challenger with a specific hypothesis in mind (e.g., “I believe that the color red will be more noticeable and therefore increase the number of visitors who click the CTA”), it will be easier to understand and act on the results down the line.

- Choose an A/B Testing Tool: A/B testing tools will help you run your A/B test and analyze your results. A poor A/B testing tool has the potential to slow down your content or restrict the types of variations you can run. My favorite A/B testing tools are SiteSpect, Optimizely, VWO, and Scandiweb, as discussed below.

- Design Your Test: With the help of an A/B testing tool, designing your test is easy. When you create your test, make sure you do the following:

  - Split your sample groups equally and randomly. Most A/B testing tools will do this automatically.

  - Determine your sample size (if applicable). You will need to set a sample size up front when only specific individuals are targeted (e.g., when testing an email). When testing content in a space where sample size is not controlled (e.g., a website), adjust the duration of your test instead so that enough visitors are included.

  - Decide how significant you need your results to be. Establishing thresholds for statistical significance and confidence levels before the test starts will speed up the analysis later. As a rule of thumb, the larger the effect of a change, the smaller the sample you need to detect it at a given confidence level.

- Accumulate Data: This is the easiest step by far. Allow the A/B test to run long enough to get statistically significant results. Though the duration of the test is situation-specific, many A/B tests run for several weeks.

- Analyze the Results: Though most A/B testing tools will return results for multiple metrics, make sure you are keeping your primary goal in mind as you do your analysis. Determine which variation performed better, and then determine whether or not the difference is statistically significant. If one variation is significantly better than the other, you have a winner!

- Plan Your Next A/B Test: A/B testing is an iterative process. Each test, whether statistically significant or not, can provide valuable insight for your subsequent tests. As such, the more iterations you run, the more successful your website optimization will be.
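
As a rough illustration of the analysis step, here is a sketch of a two-proportion z-test, one common way to check whether the difference between two conversion rates is statistically significant. Your testing tool may use a different method; the function name, the counts, and the 0.05 threshold below are assumptions chosen for the example.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Made-up results: control 120/4,000, challenger 150/4,000.
alpha = 0.05  # significance threshold chosen before the test started
z, p = two_proportion_z_test(120, 4_000, 150, 4_000)
print(f"z = {z:.2f}, p = {p:.3f}, significant = {p < alpha}")
```

With these invented numbers the p-value lands slightly above 0.05, so even a challenger that looks 25% better would not count as a winner yet. That is a good reminder to fix your threshold in advance and let the test collect enough data.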

Pitfalls of the A/B Test

A/B testing can be a time-intensive process. As such, it’s important to make sure that you aren’t making any mistakes that could affect your data. Some of the biggest pitfalls that people encounter include the following:

- Running Multiple Tests at Once: Running multiple tests at the same time, even on different parts of your site, can muddy your results because you can’t tell which change caused which effect. If you want clear, understandable results, change only one thing at a time. If you need to evaluate several changes together, consider multivariate testing (MVT) or multi-page testing.

- Picking Random Variables: Make sure you have a valid hypothesis before running your tests. If you simply make changes for the sake of making changes, without a valid reason for doing so, you will have a hard time building your results into a usable long-term strategy.

- Choosing the Wrong Duration: Choosing the wrong duration or sample size for your test can negatively influence your results. When performing your test, ensure that your duration is long enough to mitigate result convergence (when there appears to be a significant difference at first, but the difference decreases over time), but not so long that you begin to collect unneeded data.

- Using an A/B Test as a Standalone: A/B testing works best as an iterative process. Each test, whether successful or not, provides valuable insights into what changes influence optimization. Therefore, it’s important to make sure you don’t quit after the first test if it doesn’t give you the results you were hoping for.
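
To make the duration pitfall more concrete, here is a rough sketch that estimates how many visitors each variation needs and how many days that takes. It uses the standard normal-approximation formula for comparing two proportions; the 3% baseline rate, the 25% lift you hope to detect, the 80% power target, and the 1,000 daily visitors are all assumptions for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variation to detect the given lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_needed(n_per_variant: int, daily_visitors: int, variants: int = 2) -> int:
    """Days required if all daily visitors are split across the variations."""
    return ceil(n_per_variant * variants / daily_visitors)


n = sample_size_per_variant(baseline=0.03, relative_lift=0.25)
print(n, "visitors per variation,", days_needed(n, daily_visitors=1_000), "days")
```

Under these assumptions the estimate comes out at roughly nine thousand visitors per variation, or a little under three weeks of traffic. Smaller expected lifts push the required sample size up quickly, which is why estimating the duration before you start beats stopping as soon as the numbers look good.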

Best Platforms for A/B Testing

There are dozens of different A/B testing tools out there. While many will provide you with the information you need, I recommend the following:

- SiteSpect: SiteSpect sets itself apart by being one of the first and best server-side testing solutions on the market. While other A/B testing tools rely on tag-based solutions, SiteSpect edits the HTML before it leaves the server. I prefer server-side testing when possible because it eliminates many of the tag-based issues and increases client security.

- Optimizely: Optimizely was created as an experimentation platform for enterprise marketing, product, and engineering teams. Optimizely is one of the leading conversion rate optimization platforms on the market because it offers a wide range of test types, meaning you won’t outgrow the platform as your optimization strategies grow. I like using Optimizely because it is a versatile tool that is straightforward and easy to implement.

- VWO: VWO is an A/B testing and conversion rate optimization platform that is tailored for enterprise brands. In addition to A/B testing, VWO also offers split URL tests and multivariate tests. They are at the top of my list of A/B testing tools because of their comprehensive reporting dashboard. VWO uses Bayesian statistics within their SmartStats feature to give you more accurate conclusions and help you increase efficiency within your testing.

- Scandiweb: Scandiweb is an integrated service provider with the largest eCommerce development team in Europe and the United States. As such, they offer a wide range of testing options outside of A/B tests.
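
For readers curious about what a Bayesian approach to A/B results can look like, here is a generic textbook-style sketch. It is not VWO's SmartStats implementation, which I have not seen; it simply models each conversion rate with a Beta distribution (starting from a uniform prior) and estimates the probability that the challenger beats the control. The function name and the counts are made up.

```python
import random


def prob_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   draws: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so the estimate is repeatable
    wins = 0
    for _ in range(draws):
        # Posterior for each rate: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Made-up results: control 120/4,000, challenger 150/4,000.
print(f"P(challenger beats control) = {prob_b_beats_a(120, 4_000, 150, 4_000):.3f}")
```

The output reads as a direct probability that the challenger is better, which many teams find easier to act on than a p-value.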

A/B testing is a simple, efficient way to help maximize your website’s consumer metrics, sales, and conversion rates. A/B testing is one of the many techniques used by Grio to help clients elevate and optimize their web presence. For more information, see the full list of services we offer.