The Internet of Things: Future Trends and UX Considerations

What does the IoT look like in 5, 10, or 25 years?

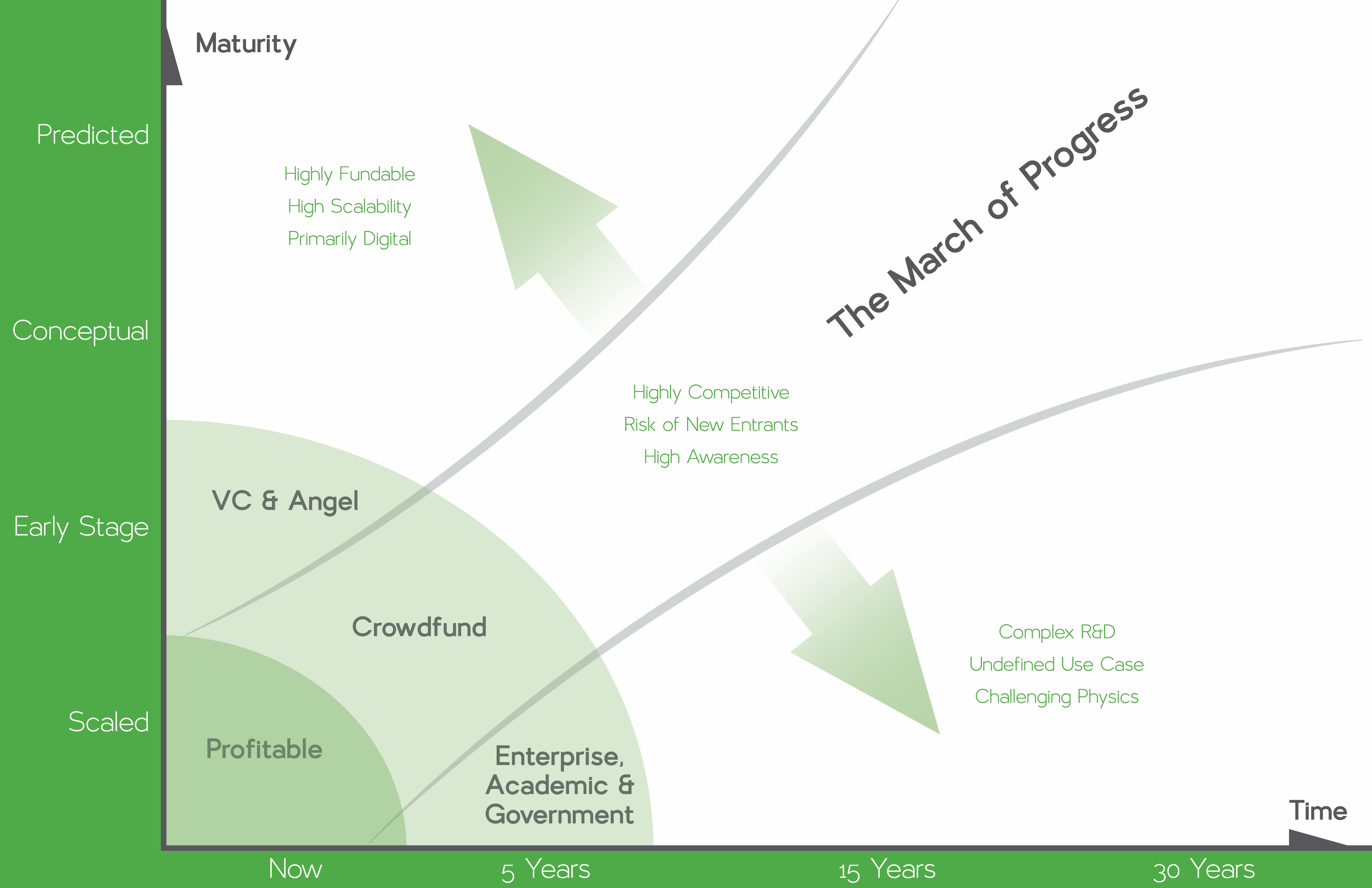

We’re living in a very exciting time for developments in technology, and there are always new stories of huge funding rounds going to companies bringing us closer to the future we’ve all imagined. Looking at the graphic below we can begin to make some sense of the different forces driving these technologies. Why do certain startups land billion-dollar rounds while others slowly emerge out of academic labs? Why do some emerging technologies mature rapidly, while others languish? What differentiates a breakout crowdfunding campaign from a VC darling?

The following charts are the result of researching nearly 400 emerging and enabling technologies related to the Internet of Things (IoT) through analysis of news, scientific papers, patents, and interviews with thought leaders. By connecting the dots across these enabling technologies we can begin to estimate when we may see certain solutions emerge, and who will be supporting their development. With this tool we can also identify key enablers that may open up opportunity for investment, or fields that are acting as a bottleneck.

The IoT takes many forms and goes by many names, but one of the most buzzworthy areas of exploration is around the smart home, where big players like Nest have made the concept a familiar term. Currently there is a free-for-all of platforms and software languages that are all fighting for market dominance, much like the days before the USB standard. This walled garden approach is a primary factor holding back the experience, and therefore demand, of the smart home.

As the experiments of today grow into the technologies of tomorrow, we are already beginning to see the notion of digital coexistence with combinations of video conferencing, telepresence robots, and haptic feedback in wearables. Another major enabling technology on our horizon today is based around ambient power sources, from rectennas pulling power out of Wi-Fi, to piezoelectric devices using vibration and air flow to power their functions. It is often said that innovation is not the creation of something new, but the combination of several existing technologies in new ways. What constellation of hardware, software, and data will bring about the next iPhone?

The largest industries in the world encompass the care for our physical wellbeing: the food we eat and the healthcare we receive. Successful players such as Fitbit, and emerging innovators in synthetic biology, are on the cusp of an explosive period of growth in this space. The data being collected about our habits of today will lead to the algorithms that drive diagnosis and treatment tomorrow. In the not-so-distant future, we can expect that we will experience more predictive health treatment than reactive doctor visits. We will monitor and treat ourselves with connected devices in our homes, on our bodies, and ultimately inside of our GI tract.

While software may be eating the world, the world is still a predominantly physical place. Our food, our clothes, our medicine, and our homes are all inherently touched by logistics. Many cities are currently awash with startups offering meals, labor, clothes, and transportation on demand. Many of these services are merely the research arm for an automated world; they are a form of human-in-the-loop computing. Uber uses drivers to learn how people move in urban areas; Munchery and Blue Apron are building data sets that can influence agriculture and healthcare on a grand scale; Stitch Fix learns and sets trends with a complex variation of A/B testing.

Ultimately these services will give way to autonomous cars, automated farming, and automated clothing recommendation. In time, certain functions will be disaggregated from their industrial-age systems of farms and factories, and brought into the home. While this may sound like a Jetsons flight of fancy, the groundwork for these experiences is being laid with the combination of data and automated manufacturing, as well as synthetic biology. Remember, the greatest innovations are made from a group of existing technologies combined in a new way.

How does user experience change as we shift away from screens?

Using Jakob Nielsen’s 10 Heuristics for Interaction Design as a framework, let’s explore some of the questions UI and UX designers may be asking in the years to come.

Visibility of system status – The system should always keep users informed about what is going on, through appropriate feedback within reasonable time.

Immediate audible responses and actions are great, but what about invisible actions?

Is your Dropcam off because of a power outage, a Wi-Fi drop, or a burglar?
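One way to make an invisible failure visible is to have a hub infer the most likely cause from the last signals it received before a device went silent. The sketch below is purely illustrative: the `LastReport` fields, the signal threshold, and the `diagnose_offline` function are all assumptions made for this example, not part of any Dropcam or Nest API.

```python
from dataclasses import dataclass
from enum import Enum


class Cause(Enum):
    POWER_OUTAGE = "power outage"
    WIFI_DROP = "Wi-Fi drop"
    POSSIBLE_TAMPERING = "possible tampering"
    UNKNOWN = "unknown"


@dataclass
class LastReport:
    """Hypothetical final heartbeat a camera sent before going silent."""
    on_battery_backup: bool      # device had already lost mains power
    wifi_signal_dbm: int         # last measured signal strength
    motion_detected: bool        # motion seen in the final seconds
    other_devices_online: bool   # is the rest of the home network reachable?


def diagnose_offline(report: LastReport, weak_signal_dbm: int = -80) -> Cause:
    """Guess why a device went offline, checking the most alarming case first."""
    # Motion just before silence, while power and network look healthy, is suspicious.
    if (report.motion_detected and report.other_devices_online
            and not report.on_battery_backup):
        return Cause.POSSIBLE_TAMPERING
    if report.on_battery_backup:
        return Cause.POWER_OUTAGE
    if report.wifi_signal_dbm <= weak_signal_dbm or not report.other_devices_online:
        return Cause.WIFI_DROP
    return Cause.UNKNOWN


def status_message(cause: Cause) -> str:
    """Turn the diagnosis into plain-language feedback for the user."""
    return f"Camera offline: {cause.value}."
```

The ordering of the checks is itself a design choice: this sketch prefers a false alarm about tampering over a missed one, which may or may not suit a given product.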

Match between system and the real world – The system should speak the users’ language, with words, phrases and concepts familiar to the user.

“Ok Google”, “Hey Siri”, and “Alexa” are easy to use; what happens when calls to action are as crowded as the .com space?

User control and freedom – Users often choose system functions by mistake; support undo and redo.

CTRL-Z is a knee-jerk reaction for many computer users. Apple has proposed the side-to-side shake as a physical gesture; what is the verbal command?

How do you extract the “undo action” from conversation?

How do you process idiomatic expressions like “Yeah, no…”?

Consistency and standards – Users should not have to wonder whether different words, situations, or actions mean the same thing.

How do you cut through the noise?

Can a company “own” a phrase or call to action?

How do you differentiate “send via ship” and “send via Shyp”?

Error prevention – Either eliminate error-prone conditions or check for them and present users with a confirmation option before they commit to the action.

How can we balance the annoying with the essential?

A command to order more tissues won’t break the bank, but an inebriated “book me a flight” might be trouble…
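One possible way to balance the annoying with the essential is to ask for confirmation only when a mistake would be costly or hard to reverse. The following is a minimal sketch under that assumption; the `VoiceCommand` type, the `needs_confirmation` rule, and the 25-dollar threshold are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class VoiceCommand:
    description: str
    estimated_cost_usd: float
    reversible: bool  # can the action be cancelled or refunded afterwards?


def needs_confirmation(cmd: VoiceCommand, cost_limit_usd: float = 25.0) -> bool:
    """Ask before acting only when a mistake would be expensive or permanent."""
    return cmd.estimated_cost_usd > cost_limit_usd or not cmd.reversible


def handle(cmd: VoiceCommand) -> str:
    """Either carry out the command or return a confirmation prompt."""
    if needs_confirmation(cmd):
        return f"Confirm: {cmd.description} for about ${cmd.estimated_cost_usd:.2f}?"
    return f"Done: {cmd.description}."


tissues = VoiceCommand("order more tissues", 6.0, reversible=True)
flight = VoiceCommand("book a flight", 420.0, reversible=False)
print(handle(tissues))  # low cost and reversible: acts immediately
print(handle(flight))   # high cost and irreversible: asks first
```

A real system would likely tune the threshold per user and per context, which is where the learned behavior patterns discussed later come in.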

Recognition rather than recall – Minimize the user’s memory load by making objects, actions, and options visible. The user should not have to remember information from one part of the dialogue to another. Instructions for use of the system should be visible or easily retrievable whenever appropriate.

Many of our interactions will be handled without a GUI. For many IoT applications this is fine, but what about other devices?

Do they ping you with a status update?

Do they manifest on another device?

Flexibility and efficiency of use – Accelerators — unseen by the novice user — may often speed up the interaction for the expert user such that the system can cater to both inexperienced and experienced users. Allow users to tailor frequent actions.

What are the verbal shortcuts for the future?

Do we carry over existing HCI conventions? How can we develop for existing human behavior?

Saying “control z” sounds laughable, yet saying “brb” has become acceptable…

Aesthetic and minimalist design – Dialogues should not contain information which is irrelevant or rarely needed.

How can we leverage data streams to surprise and delight users with the right behaviors?

Not everything must be requested by the user, but be careful what you assume…

Help users recognize, diagnose, and recover from errors – Error messages should be expressed in plain language that indicates the problem and constructively suggests a solution.

Do you ask the user to repeat themselves?

Do you propose a few options?

Do you pull up a search on their smart TV or mobile device?

Help and documentation – It may be necessary to provide help and documentation. Any such information should be easy to search, focused on the user’s task, list concrete steps to be carried out, and not be too large.

For the foreseeable future, the web and mobile GUI will be the home of documentation… What happens when we move beyond this convention? AR? Better voice?

Conclusions

Looking through the trends and considerations we’ve explored, there are a few major themes that can be extracted from this topic.

- Users want human connection and less screen time.

Advantages: Our minds are optimized for multi-channel audio and our voices are fine-tuned for expression

Risks: Accurate interpretation of a wide range of voices, accents, speech patterns, and languages

Lessons: Avoid the uncanny valley

- Voice control is a (weak) substitute for a GUI in the long run

Advantages: Much less power usage and more flexible form factors

Risks: User trust is fragile, switching costs are low – Samsung TV

Lessons: Balance convenience with “big brother”

- The digital world around us will change as we pass through thresholds

Advantages: Automated convenience: devices will ready themselves as we arrive. Reduced effort allows for greater use of our time whether it is productive, social or restful.

Risks: False positives can trigger unpleasant events. Behavior will be nudged by devices, run by algorithms, and developed by business with an unseen intent.

Lessons: Set defaults carefully and learn behavior patterns. Allow settings to be fine-tuned without exhausting the user with onboarding.

- There will be sensors everywhere around us, on us, and eventually in us.

Advantages: Real-time, data-driven control of our world; complete awareness of changes in state for health, security, location, maintenance, and uptime

Risks: Privacy will be redefined when all data is sent to the cloud. Large networks have many access points to secure.

Lessons: There is an art to deciding which sensor to listen to and how to group their input; accurate sensors do not necessarily equate to appropriate actions.

- Control will be distributed to many sources

Advantages: Quick reflexive actions, brand loyalty, custom environments and moods

Risks: Complex conditional relationships across sensors; possible mixed signals across recipes, apps, and devices.

Lessons: Offer users feedback and confirmation through multiple mechanisms. Design to work with more devices; use a less siloed approach to connectivity.
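The risk of mixed signals across recipes can be made concrete with a small check. The sketch below assumes a deliberately simplified, hypothetical `Recipe` model (one trigger, one device, one action) and flags pairs of recipes that fire on the same trigger but ask the same device to do different things. Real automation platforms have richer conditions, so treat this as an illustration of the idea rather than a working conflict detector.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Recipe:
    name: str
    trigger: str   # event that fires the recipe, e.g. "arrive_home"
    device: str    # device the recipe controls
    action: str    # state the recipe asks the device to enter


def find_conflicts(recipes):
    """Return name pairs of recipes that share a trigger and device but disagree on the action."""
    conflicts = []
    for a, b in combinations(recipes, 2):
        if a.trigger == b.trigger and a.device == b.device and a.action != b.action:
            conflicts.append((a.name, b.name))
    return conflicts


recipes = [
    Recipe("Welcome home", "arrive_home", "living_room_lights", "on"),
    Recipe("Movie night", "arrive_home", "living_room_lights", "off"),
    Recipe("Warm up", "arrive_home", "thermostat", "heat"),
]
print(find_conflicts(recipes))
```

Surfacing such a conflict to the user at setup time, rather than letting the lights flicker at the front door, is one form of the feedback and confirmation recommended above.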